Calculating a Maclaurin series


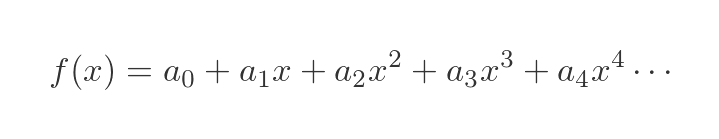

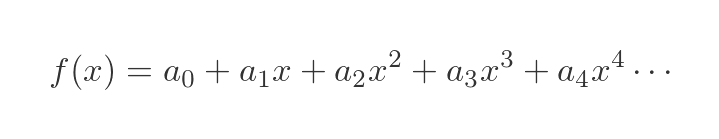

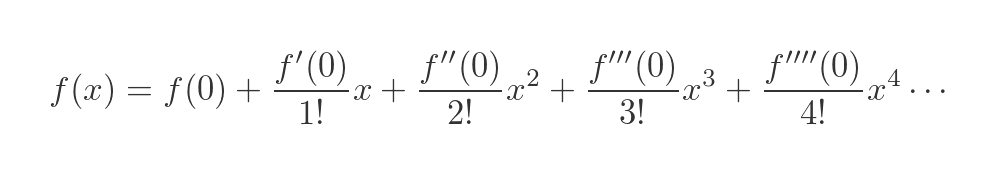

A Maclaurin series allows us to calculate the approximate value of a function f(x) as a polynomial:

$$f(x) = a_0 + a_1 x + a_2 x^2 + a_3 x^3 + \dots$$

The values a0, a1, a2 ... can be calculated in terms of the derivatives of the function at x = 0:

$$f(x) = f(0) + f'(0)\,x + \frac{f''(0)}{2!}\,x^2 + \frac{f'''(0)}{3!}\,x^3 + \dots$$

In this formula:

- f(0) is the value of the function for x = 0.

- f'(0) is the value of the first derivative function for x = 0.

- f''(0) is the value of the second derivative function for x = 0.

- f'''(0) is the value of the third derivative function for x = 0.

- And so on.

The method can be applied to many common functions, for example the exponential function $e^x$, the natural logarithm in the form ln(1 + x) (ln(x) itself is not defined at x = 0), sine and cosine functions, hyperbolic sine and cosine functions, and many others.

The result is often a polynomial with an infinite number of terms. In many common cases, the terms get smaller very quickly for higher powers of x, so it is possible to calculate a value to any required accuracy by calculating the values of a sufficient number of terms.

The Maclaurin series matches the value of the function exactly at x = 0, and a small number of terms usually gives a good approximation for values of x that are close to zero. For values of x that are further from zero, it is generally necessary to calculate more terms to obtain the required accuracy.
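As a rough illustration of this point, the sketch below sums the first few terms of the well-known Maclaurin series for $e^x$ (where every coefficient is 1/n!) and compares the result with Python's `math.exp`. The helper name `exp_maclaurin` and the sample values of x are made up for this example.

```python
import math

def exp_maclaurin(x, n_terms):
    """Sum the first n_terms terms of the Maclaurin series for e^x."""
    return sum(x**n / math.factorial(n) for n in range(n_terms))

# Compare the truncated series with math.exp close to zero and further away.
for x in (0.1, 1.0, 3.0):
    for n_terms in (3, 6, 12):
        error = abs(exp_maclaurin(x, n_terms) - math.exp(x))
        print(f"x = {x}, terms = {n_terms}, error = {error:.2e}")
```

For a fixed number of terms the error should grow as x moves away from zero, and for a fixed x it should shrink as more terms are added.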

A Taylor series, which is a generalisation of the Maclaurin series, is built around a point other than zero, so it can be used to calculate accurate values of f(x) when x is close to that point. That will be covered in a later article.

How the Maclaurin series works

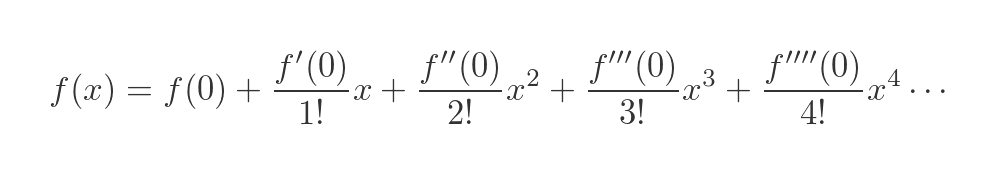

We are trying to express some function f(x) as a polynomial of the form given above. We aim to make this approximation as accurate as possible for values of x that are close to 0.

We will start by assuming that it is possible to find such a series.

We can then apply a process to find the values of a0, a1 etc.

Finally, we can verify that the method works for a particular f(x) by creating a graph of the polynomial, and comparing it to a graph of f(x).
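A numerical stand-in for the graph comparison might look like the sketch below. It assumes f(x) = cos(x) and uses the first three non-zero terms of its standard Maclaurin series; the function name `cos_polynomial` and the sample points are chosen purely for illustration.

```python
import math

def cos_polynomial(x):
    """First three non-zero terms of the Maclaurin series for cos(x)."""
    return 1 - x**2 / 2 + x**4 / 24

# Print the polynomial and the true function side by side at a few points.
for x in (0.0, 0.5, 1.0, 2.0, 3.0):
    print(f"x = {x}: polynomial = {cos_polynomial(x):.5f}, cos(x) = {math.cos(x):.5f}")
```

The two columns should agree closely near x = 0 and drift apart as x increases, which is the same behaviour a plot of the two curves would show.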

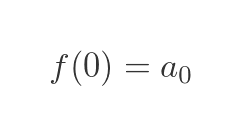

Step 1 - finding the value of a0

Given the approximation we defined above:

$$f(x) = a_0 + a_1 x + a_2 x^2 + a_3 x^3 + \dots$$

We can determine the value of a0 quite easily. If we set x to zero, all the terms in x go to zero, so we are left with:

$$f(0) = a_0$$

So the value of a0 is simply f(0).

Step 2 - finding the value of a1

Looking again at the original formula:

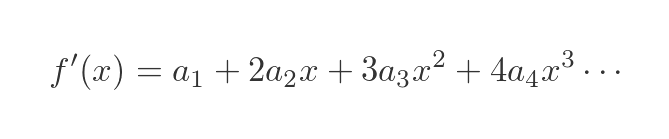

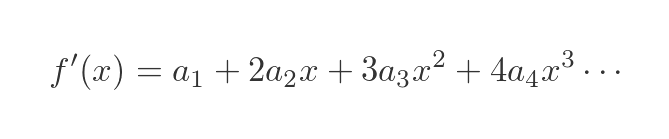

How can we find the value of a1? We can do this by differentiating each side. Since f(x) and the infinite polynomial are supposed to be the same function, it follows that their first derivatives should also be equal:

$$f'(x) = a_1 + 2 a_2 x + 3 a_3 x^2 + \dots$$

We have just used the power rule to differentiate the RHS of the equation:

- The derivative of $a_0$ is zero, so the term in $a_0$ disappears.

- The derivative of $a_1 x$ is $a_1$.

- The derivative of $a_2 x^2$ is $2 a_2 x$.

- The derivative of $a_3 x^3$ is $3 a_3 x^2$.

- And so on.

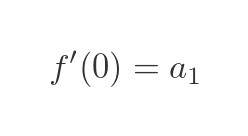

As before, we set x to zero. All the terms in x go to zero, so we are left with:

$$f'(0) = a_1$$

This tells us that a1 is equal to the first derivative of f(x) at x = 0.

Step 3 - finding the value of a2

Here is the previous formula, where we differentiated each side:

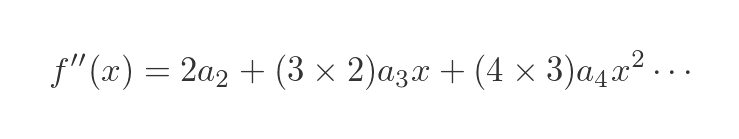

Now we would like to find the value of a2. We could try differentiating each side again, since it worked quite well last time. The same logic applies: since f(x) and the infinite polynomial are the same function, it follows that their second derivatives should also be equal.

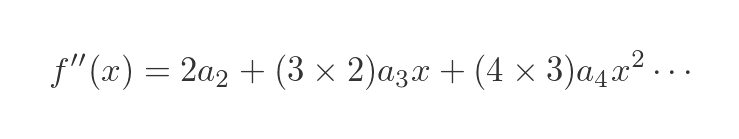

We use the power rule to differentiate the RHS of the equation, but this time we need to take into account the factors from the previous time:

- The derivative of $a_1$ is zero, so the term in $a_1$ disappears.

- The derivative of $2 a_2 x$ is $2 a_2$.

- The derivative of $3 a_3 x^2$ is $(2 \times 3)\, a_3 x$.

- And so on.

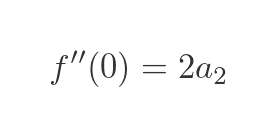

Now we can set x to zero again, and once again all the terms in x will be zero, so we have:

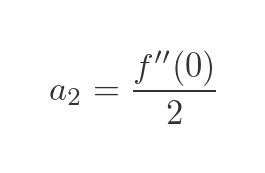

Which gives us this value for a2:

$$a_2 = \frac{f''(0)}{2}$$

Step 4 - finding the value of a3

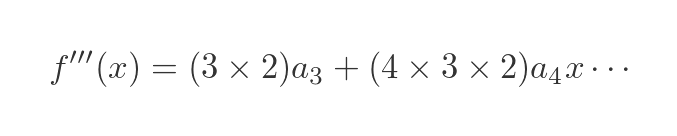

To find the value of a3 we just need to repeat the previous procedure.

Taking the previous equation:

$$f''(x) = 2 a_2 + (2 \times 3)\, a_3 x + (3 \times 4)\, a_4 x^2 + \dots$$

Differentiating once more gives:

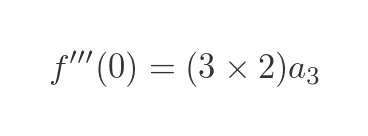

When x = 0 we have:

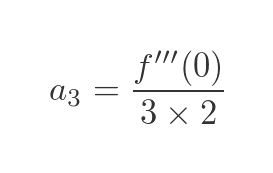

Which gives us this value for a3:

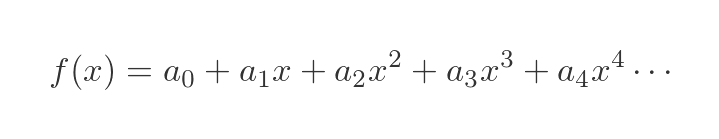
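As a worked example of the four steps (not part of the derivation itself), take $f(x) = e^x$. Every derivative of $e^x$ is $e^x$, and $e^0 = 1$, so each derivative at x = 0 is equal to 1. The steps then give:

$$a_0 = f(0) = 1, \quad a_1 = f'(0) = 1, \quad a_2 = \frac{f''(0)}{2} = \frac{1}{2}, \quad a_3 = \frac{f'''(0)}{6} = \frac{1}{6}$$

so the first four terms of the series are:

$$e^x \approx 1 + x + \frac{x^2}{2} + \frac{x^3}{6}$$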

General formula for a Maclaurin series

There is a pattern here that we can use to extend the series to any number of terms. The nth coefficient is equal to the nth derivative of f(x), evaluated at x = 0, divided by a factor. We need to know how to calculate the factor.

a3 has a divisor of 6 because we differentiated the term 3 times, first as a cube (giving a factor of 3), then as a square (giving an extra factor of 2), then as a term in x (giving an extra factor of 1, which has no effect).

The divisor is therefore (3 × 2 × 1), which is 3 factorial.
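The way these factors accumulate can be checked with a small sketch. It stores a polynomial as a list of coefficients, differentiates it repeatedly with the power rule, and reads off the constant term each time (which is what setting x to zero leaves). The function name `differentiate` and the example coefficients are arbitrary choices for this illustration.

```python
import math

def differentiate(coeffs):
    """Differentiate a polynomial given as [a0, a1, a2, ...] using the power rule."""
    # The term a_n x^n becomes n a_n x^(n-1); the constant term drops out.
    return [n * a for n, a in enumerate(coeffs)][1:]

# An arbitrary example polynomial: 5 + 4x + 3x^2 + 2x^3 + 7x^4
coeffs = [5, 4, 3, 2, 7]

current = coeffs
for k in range(len(coeffs)):
    # Setting x = 0 leaves only the constant term, which is current[0].
    value_at_zero = current[0]
    print(f"derivative {k} at x = 0: {value_at_zero} = {math.factorial(k)} * a{k}")
    current = differentiate(current)
```

Each printed value is k factorial times the original coefficient, so dividing the kth derivative at zero by k factorial recovers that coefficient.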

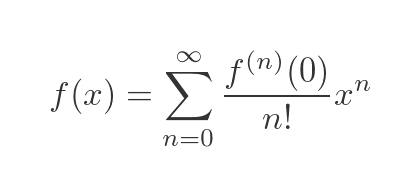

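For a4, there is an extra step of differentiating the power of 4, which gives an extra factor of 4. So the divisor for a4 is 4 factorial. For a5 the divisor is 5 factorial, and so on. So we can write the general series as:

$$f(x) = f(0) + f'(0)\,x + \frac{f''(0)}{2!}\,x^2 + \frac{f'''(0)}{3!}\,x^3 + \frac{f^{(4)}(0)}{4!}\,x^4 + \dots$$

This can be written as a sum, using sigma notation:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(0)}{n!}\,x^n$$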

There are a couple of things to note about this equation:

- $f^{(n)}$ means the nth derivative of f.

- $f^{(0)}$ therefore means the zeroth derivative, which is simply the function itself, f.

- 0 factorial is defined to have a value of 1.
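The general formula can be sketched in a few lines of Python. The example assumes f(x) = sin(x), whose derivatives at x = 0 repeat in the cycle 0, 1, 0, -1; the function name `maclaurin_sum` and the choice of 12 terms are illustrative only.

```python
import math

def maclaurin_sum(derivatives_at_zero, x):
    """Evaluate the sum of f^(n)(0) / n! * x^n over the supplied derivative values."""
    return sum(
        d / math.factorial(n) * x**n
        for n, d in enumerate(derivatives_at_zero)
    )

# For f(x) = sin(x) the derivatives at x = 0 repeat in the cycle 0, 1, 0, -1.
sin_derivatives = [(0, 1, 0, -1)[n % 4] for n in range(12)]

x = 1.0
print(maclaurin_sum(sin_derivatives, x), math.sin(x))
```

The two printed values should agree to many decimal places, and passing a longer list of derivative values adds more terms to the sum.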
